99 AI Founders: The Missing Layer in the AI Stack

Over the past few years, I've been asking founders the same question.

Have you tried using AI agents to improve your product — and did it actually work?

The answer was surprisingly consistent:

agents produced work, but rarely moved the metric.

At a YC Founder Dinner in San Francisco

At a YC founder dinner in San Francisco, I sat down with a founder who had just closed a $1.5M round.

Their product had a friction point: users had to connect a data source before seeing their first result. A large number of new users were dropping off at exactly that step.

They did the obvious thing — they tried an AI agent.

They packaged up the onboarding page, some user feedback, and their activation metrics, handed it to the agent, and asked it to optimize the flow.

A few hours later, the agent delivered: a new headline, shorter copy, a more prominent CTA, a three-step onboarding flow, and a set of follow-up tasks ready to push to Linear.

If you only looked at the output, it almost looked like a junior product team had done the work.

They shipped it.

The numbers didn't move.

Users were still dropping off at the same step. Still asking the same question:

"Why do I need to connect my data right now?"

That's when the real problem surfaced.

Users weren't confused about where the button was.

They didn't know what they'd get after connecting. They weren't sure about the permission scope. They hadn't seen enough value yet — and they were already being asked to make a high-trust move.

What made it worse: this team had already validated a better path. Show users a sample result first. Let them understand the value before asking them to connect real data.

The agent didn't know that.

It optimized the surface of the page. Not the user's decision.

It generated a lot of output. It didn't drive any real product progress.

Not because the agent wasn't smart enough.

Because it had no product context.

It knew what the page looked like.

It didn't know why the product was stuck.

This Wasn't One Story

Across 99 cases, I noticed a pattern.

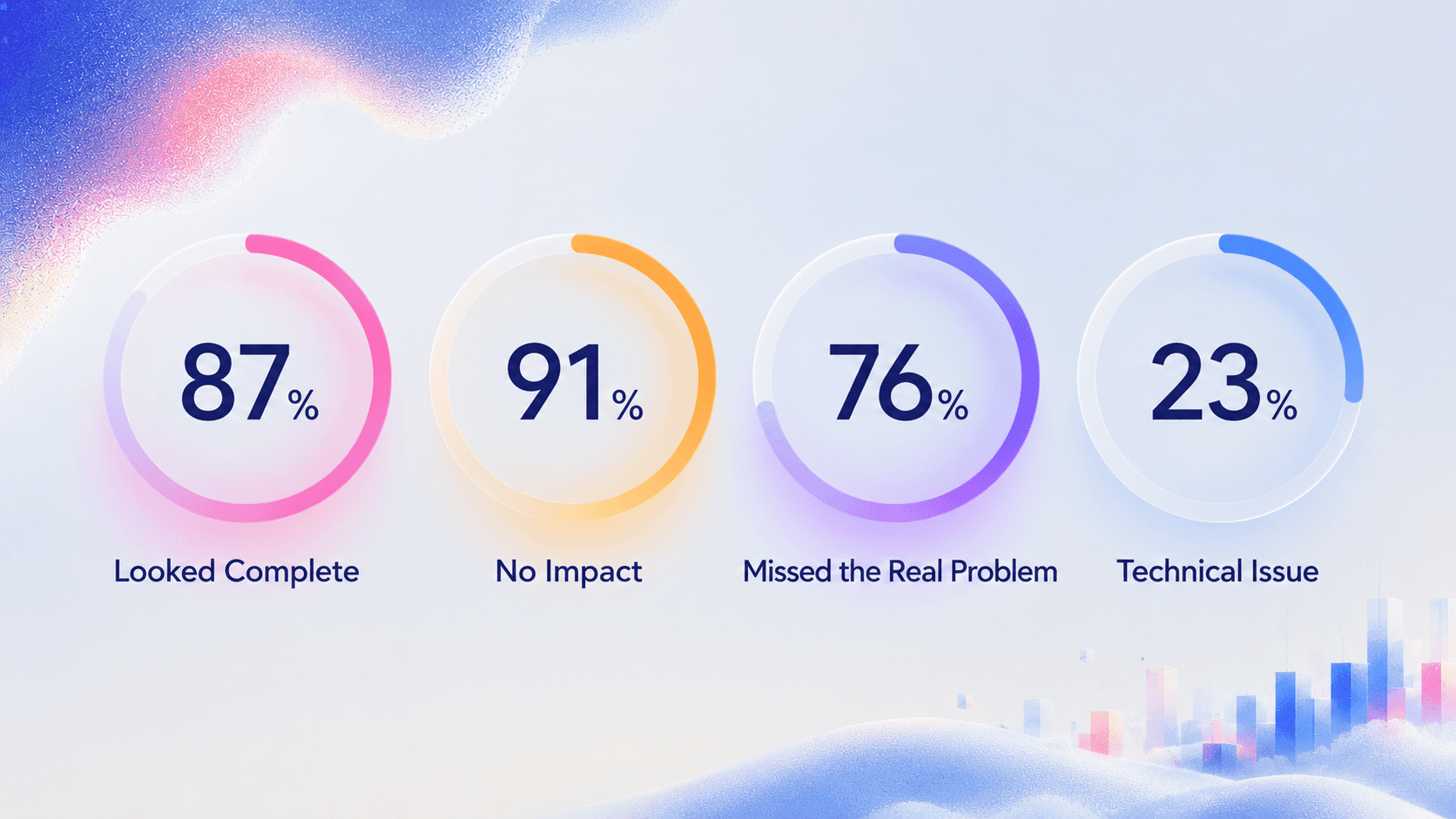

87 teams told me the agent produced something that "looked complete." Rewritten copy. Optimized flows. A stack of tasks.

91 teams said the core metrics didn't move after shipping.

76 teams realized in the post-mortem that the problem wasn't the quality of the solution — the agent simply didn't understand why the product was stuck. It optimized what it could see. The real problem was hiding in what it couldn't.

Several founders used the same phrase:

"It worked hard. It just didn't understand us."

Broader industry reports point the same way:

88% of AI agent projects never reach production. (Digital Applied, 2026)

80% of enterprise AI projects fail to deliver intended business value. (RAND Corporation, 2025)

Only 23% of failures are caused by technical problems. The rest come down to context. (RAND / Folio3, 2025)

The models are ready. The products aren't.

99 Cases. Same Result.

The Layer Nobody Is Building

For the past three years, we've been building the AI stack from the top down.

Models keep getting stronger. Agent frameworks keep maturing. The application layer keeps expanding.

But there's one layer nobody is building.

A layer that answers a deceptively simple question:

What does this product actually know about itself?

Not what the UI looks like. Not how the code is written.

The product's behavioral history. Real user decision patterns. What the team has tried, what failed, where things got stuck — and why.

Without this layer, every agent call is like walking into a room you've never been in, glancing at the walls, and starting to repaint.

You don't know who lives there. You don't know what was changed before. You don't know what the actual problem is.

"I call this the Product Context Layer."

Simon Dai, Founder at ReUX

The Product Context Layer is your product's living memory of itself.

Not documentation. Not a prompt. Not a screenshot.

It's the product's structure, real user behavioral models, live signals, and all the forgotten decisions that led to where things are today — packaged into something an agent can actually reason over.

Without it, every agent starts from zero. Every session is a fresh coat of paint on a wall nobody has looked at in years.
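To make that concrete, here is a minimal sketch of what such a layer could look like as a data structure. It is an illustration under stated assumptions, not ReUX's implementation: the names `ProductContext`, `Decision`, and `to_brief` are hypothetical, and the example entries are taken from the onboarding story above.

```python
from dataclasses import dataclass, field


@dataclass
class Decision:
    """One thing the team tried, its outcome, and the reason behind it."""
    change: str
    outcome: str  # free-form label, e.g. "validated" or "no change"
    reason: str


@dataclass
class ProductContext:
    """Hypothetical container for what a product knows about itself."""
    structure: list[str] = field(default_factory=list)     # key flows and steps
    behaviors: list[str] = field(default_factory=list)     # observed user decision patterns
    signals: dict[str, str] = field(default_factory=dict)  # live signals, described in text
    decisions: list[Decision] = field(default_factory=list)

    def to_brief(self) -> str:
        """Render the context as plain text an agent can read before it acts."""
        sections = [
            ("PRODUCT STRUCTURE", self.structure),
            ("USER BEHAVIOR", self.behaviors),
            ("LIVE SIGNALS", [f"{k}: {v}" for k, v in self.signals.items()]),
            ("PAST DECISIONS",
             [f"[{d.outcome}] {d.change} (why: {d.reason})" for d in self.decisions]),
        ]
        lines = []
        for title, items in sections:
            lines.append(title)
            lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


# Example entries drawn from the onboarding story; the wording is illustrative.
context = ProductContext(
    structure=[
        "Onboarding: sign up, connect a data source, see first result",
        "Users must connect data before seeing any result",
    ],
    behaviors=[
        "New users drop off at the connect-data step",
        "Users ask why they need to connect their data right now",
    ],
    signals={"activation": "drop-off concentrated at the connect-data step"},
    decisions=[
        Decision(
            change="Show a sample result before asking users to connect data",
            outcome="validated",
            reason="Users understand the value before making a high-trust move",
        ),
        Decision(
            change="New headline, shorter copy, more prominent CTA",
            outcome="no change",
            reason="Users were not confused about where the button was",
        ),
    ],
)

brief = context.to_brief()
print(brief)
```

The brief would be placed in front of the agent's task, so that a request like "optimize the onboarding flow" arrives together with the validated path and the failed attempt instead of a screenshot alone.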

Back to That YC Founder

I asked him one question before we wrapped up:

"If the agent had known about the path your team had already validated — would it have delivered that same plan?"

He thought for a second.

"No," he said.

Then he said something I haven't forgotten:

"We didn't need a smarter agent. We needed a system that could make the agent understand our product."

Gartner projects that by the end of 2026, 40% of enterprise applications will include AI agents. (Gartner, 2026)

Each of them will be only as smart as what it can read.

Most products are still giving their agents nothing.

I'm building ReUX — helping product teams build their Product Context Layer. MVP launching July 2026.

If you've hit this wall, I'd love to hear about it.

Published May 15, 2026 · 5 min read